Quantitative Stock Forecasting Engine

LSTM & Attention-based model with real-capital validation

Role: ML Researcher

This project focuses on the development and deployment of a specialized time-series forecasting engine designed to predict equity price volatility. Unlike purely theoretical academic projects, this system was validated with real capital in a live market environment.

Working in a small team, we built a full-stack solution, from raw data ingestion to signal generation, that bridges the gap between “test-set accuracy” and real-world performance.

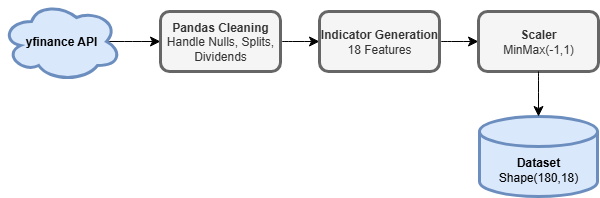

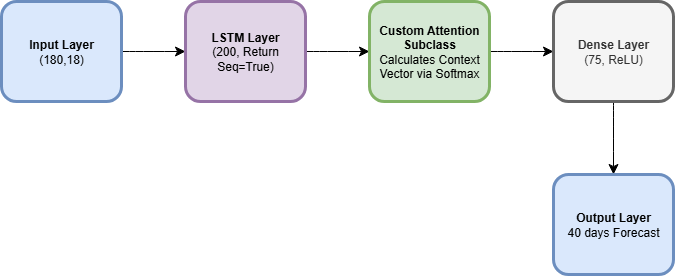

Left: The custom ETL pipeline. Right: The LSTM/Attention model architecture.

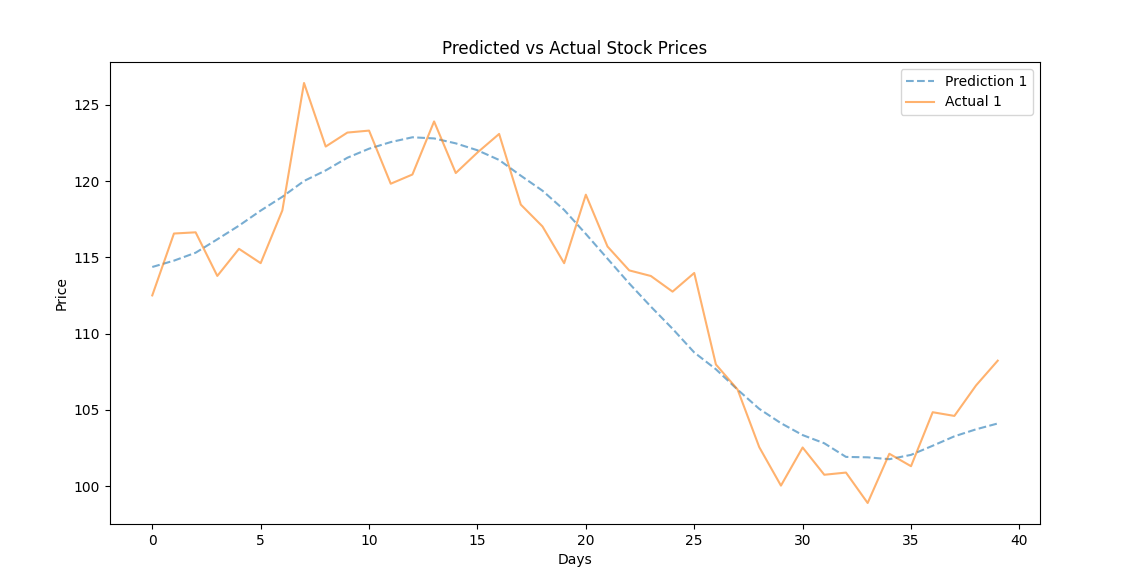

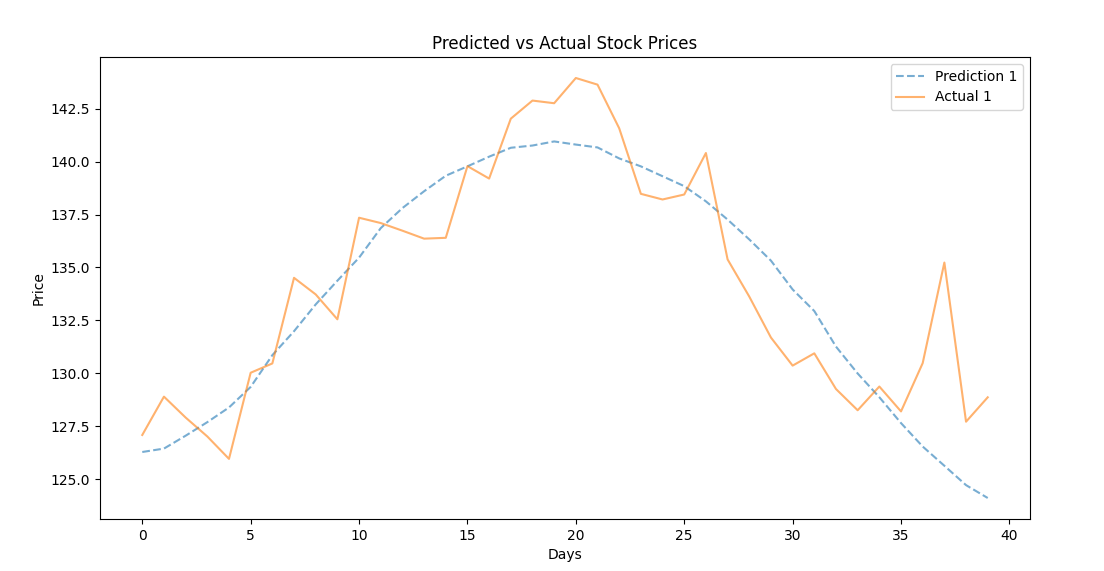

Real-World Performance

- Quantitative Success: The model achieved a Mean Absolute Percentage Error (MAPE) below 2% over the 40-day prediction window, a result considered exceptional by industry standards for mid-cap equities.

- Capital Efficiency: In a 2-month live trading test, the strategy generated a Return on Investment (ROI) of roughly 567%, growing a test account from $300 to $2,000. To pursue this aggressive return, we trained the forecaster to predict 10 days ahead, enabling shorter-duration options trades instead of longer-term holds.

Model Validation: The engine's forecast (blue dashed) tracks actual price movement (orange solid) over a 40-day forecast window. Note how the model smooths out noise while still capturing the macro trend.
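The two headline metrics can be checked with a few lines of NumPy. This is a minimal sketch; the function names `mape` and `roi` are illustrative and are not taken from the project's code.

```python
import numpy as np


def mape(actual, forecast) -> float:
    """Mean Absolute Percentage Error, returned in percent."""
    actual = np.asarray(actual, dtype=float)
    forecast = np.asarray(forecast, dtype=float)
    # Average of |error| / |actual|, scaled to a percentage.
    return float(np.mean(np.abs((actual - forecast) / actual)) * 100)


def roi(start_value: float, end_value: float) -> float:
    """Return on investment, returned in percent."""
    return (end_value - start_value) / start_value * 100
```

For example, `roi(300, 2000)` evaluates to about 566.7, the percentage return for growing $300 into $2,000, and `mape([100, 200], [98, 204])` is 2.0 (each forecast is off by 2%).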

The Data Pipeline

To support the model, I engineered a robust data pipeline capable of handling noisy financial data.

- Data Gathering: Used yfinance to gather 10 years of daily time-series data for 50+ target equities.

- Cleaning: Implemented automated cleaning protocols to handle missing daily bars, stock splits, and dividends, ensuring data integrity before training.

- Feature Engineering: Enriched the dataset with 18 distinct predictive indicators (technical and statistical) to expand the feature space for the neural network.

- Normalization: Scaled every feature to the range [-1, 1] with a MinMax scaler so that features with large raw magnitudes (such as volume) do not dominate those with small ones.
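The cleaning, feature, and scaling steps can be sketched end to end in pandas. This is a minimal sketch under stated assumptions, not the project's pipeline: it processes one ticker's daily frame with the columns yfinance returns when `auto_adjust=False` (`Open`, `High`, `Low`, `Close`, `Adj Close`, `Volume`); it implements only three of the 18 indicators (Bollinger Bands, VWAP, and Aroon, which the project names), using common default windows of 20 and 25 days that are assumptions; and the function names are hypothetical.

```python
import numpy as np
import pandas as pd

PRICE_COLS = ["Open", "High", "Low", "Close"]


def clean_ohlcv(raw: pd.DataFrame) -> pd.DataFrame:
    """Hypothetical cleaner for one ticker's daily bars (yfinance-style columns)."""
    df = raw.sort_index()
    df = df[~df.index.duplicated(keep="first")].copy()
    # Fill missing bars: carry prices forward, treat missing volume as zero.
    df[PRICE_COLS + ["Adj Close"]] = df[PRICE_COLS + ["Adj Close"]].ffill()
    df["Volume"] = df["Volume"].fillna(0)
    # Back-adjust OHLC for splits and dividends with the Adj Close / Close ratio.
    factor = df["Adj Close"] / df["Close"]
    df[PRICE_COLS] = df[PRICE_COLS].mul(factor, axis=0)
    return df.drop(columns="Adj Close").dropna()


def add_indicators(df: pd.DataFrame, window: int = 20, aroon_n: int = 25) -> pd.DataFrame:
    """Add three example indicators; window sizes are assumed defaults."""
    out = df.copy()
    # Bollinger Bands: rolling mean +/- 2 population standard deviations.
    mid = out["Close"].rolling(window).mean()
    std = out["Close"].rolling(window).std(ddof=0)
    out["bb_upper"] = mid + 2 * std
    out["bb_lower"] = mid - 2 * std
    # Rolling VWAP on daily bars (an approximation; classic VWAP resets each session).
    typical = (out["High"] + out["Low"] + out["Close"]) / 3
    out["vwap"] = (typical * out["Volume"]).rolling(window).sum() / out["Volume"].rolling(window).sum()
    # Aroon: position of the n-period high/low inside an (n + 1)-bar window, scaled to 0-100.
    out["aroon_up"] = out["High"].rolling(aroon_n + 1).apply(np.argmax, raw=True) / aroon_n * 100
    out["aroon_down"] = out["Low"].rolling(aroon_n + 1).apply(np.argmin, raw=True) / aroon_n * 100
    return out.dropna()


def minmax_scale(features: pd.DataFrame, train_rows: int, lo: float = -1.0, hi: float = 1.0) -> pd.DataFrame:
    """Map each column to [lo, hi] using min/max fitted on the training rows only."""
    train = features.iloc[:train_rows]
    fmin, fmax = train.min(), train.max()
    span = (fmax - fmin).replace(0, 1.0)  # guard against constant columns
    return lo + (features - fmin) / span * (hi - lo)
```

Fitting the min and max on the training rows alone keeps later prices from leaking into the scaling, at the cost of test-period values occasionally falling slightly outside [-1, 1]. `sklearn.preprocessing.MinMaxScaler(feature_range=(-1, 1))` computes the same transform.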

Technical Architecture

The core of the system is a custom deep learning model built with TensorFlow and Keras.

- Custom Model Architecture: I wrote a custom Attention layer (a Keras Layer subclass) rather than using an off-the-shelf component, and combined it with LSTM layers so the model learns how much weight to give historical volatility relative to current price action.

- Optimization & Training: Used learning-rate scheduling and early-stopping callbacks to speed convergence and halt training before the model overfits to noise.

- Feature Inputs: The model is fed a decade of daily time-series data enriched with 18 predictive indicators (including VWAP, Aroon, and Bollinger Bands).
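The write-up does not give the attention layer's exact formulation. A common choice for pairing attention with an LSTM is additive attention pooling over the LSTM's per-timestep outputs, sketched below in plain NumPy so the math is visible without a framework. This is an assumption, not the project's layer: in a Keras Layer subclass, the parameters `W`, `b`, and `v` would typically be created in `build()` with `self.add_weight`, and the same computation would be written with TensorFlow ops in `call()`.

```python
import numpy as np


def additive_attention(hidden: np.ndarray, W: np.ndarray, b: np.ndarray, v: np.ndarray):
    """Additive attention pooling over LSTM outputs (illustrative sketch).

    hidden: (batch, timesteps, units), e.g. from an LSTM with return_sequences=True
    W: (units, attn_dim), b: (attn_dim,), v: (attn_dim,) are learned parameters
    Returns (context, weights) with shapes (batch, units) and (batch, timesteps).
    """
    # Score each timestep: v . tanh(h_t W + b)
    scores = np.tanh(hidden @ W + b) @ v                   # (batch, timesteps)
    # Softmax over the time axis, shifted by the max for numerical stability.
    scores = scores - scores.max(axis=1, keepdims=True)
    weights = np.exp(scores)
    weights /= weights.sum(axis=1, keepdims=True)
    # Context vector: attention-weighted sum of the hidden states.
    context = np.einsum("bt,btu->bu", weights, hidden)     # (batch, units)
    return context, weights
```

Because the weights sum to 1 across timesteps, they can be inspected directly to see which past days the model leans on for a given forecast; with all scores equal, the layer reduces to a plain average of the LSTM outputs.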